Apparent Magnitude

Some astronomical objects and their apparent magnitudes from Earth.

Before telescopes, people looked at the sky and classified the objects they saw by their brightness. Hipparchus, a Greek astronomer and mathematician, sorted over 850 stars into six categories of brightness. Scientists later adopted the word magnitude, keeping and extending the scale developed by Hipparchus. The brightest stars were called first magnitude stars, the next brightest second magnitude stars, and so on. Today, we measure the brightness of an object using this same scale, but with much more precision and over a much wider range of values. The scale runs so that the lower the magnitude, the brighter the object, which means a star with a magnitude of -1 is brighter than a star with a magnitude of 2.
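To put a number on "brighter," here is a minimal Python sketch. It assumes the standard modern definition of the scale, which the text above does not spell out: a difference of 5 magnitudes corresponds to a brightness ratio of exactly 100. The function name `brightness_ratio` is made up for this illustration.

```python
def brightness_ratio(mag_a: float, mag_b: float) -> float:
    """Return how many times brighter object A appears than object B.

    Assumes the modern magnitude convention (not stated in the text):
    5 magnitudes of difference = a factor of 100 in brightness.
    """
    # Lower magnitude means brighter, so the exponent uses (mag_b - mag_a).
    return 10 ** (0.4 * (mag_b - mag_a))


# The example from the text: a magnitude -1 star versus a magnitude 2 star.
ratio = brightness_ratio(-1.0, 2.0)
print(f"A magnitude -1 star appears about {ratio:.1f} times brighter")
```

Under that assumption, the three-magnitude gap in the example works out to a factor of roughly 16 in brightness.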

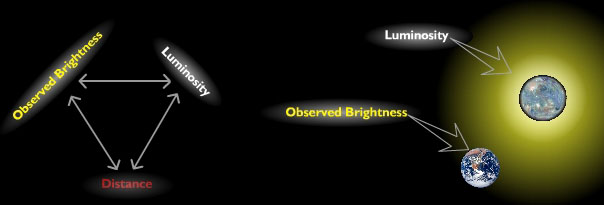

Luminosity, distance, and brightness are interrelated. Observed brightness is what we see here on Earth, while luminosity is the actual light energy produced by a star.

You probably know from direct experience that a light source seen from far away appears much dimmer than the same source viewed from up close. This is the basic idea that enables scientists to use light to measure astronomical distances. The energy a star emits in the form of light per unit time is called the star's luminosity. This value is independent of distance, so astronomers treat it as a measure of the star's intrinsic brightness. Observed brightness, what we actually see, depends on the distance between the star and the observer.

[1.5a] Sidequest: Absolute and Apparent Magnitude


The Relation Between Brightness and Luminosity

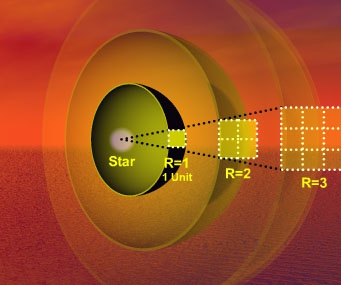

The brightness of a star is proportional to its luminosity divided by the square of its distance from the observer. In this example, the brightness is one at one unit of distance from the star, but at three units of distance it is only one ninth as large.

As light from a star spreads out, its energy covers larger and larger areas, causing the energy per unit area to decrease in an inverse square relationship. This means that doubling the distance reduces the energy per unit area to one quarter of its original value. With more area to cover, the light from the star appears dimmer. We call this "energy per unit area" brightness.
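The inverse square relationship can be checked with a short Python sketch. It assumes the star radiates equally in all directions and that nothing absorbs the light along the way, so the energy is spread over a sphere of area 4πd². The function name `observed_brightness` and the unit values are chosen only for illustration.

```python
import math


def observed_brightness(luminosity: float, distance: float) -> float:
    """Energy per unit time per unit area received at a given distance.

    Assumes isotropic emission and no absorption (illustrative assumptions):
    the luminosity is spread evenly over a sphere of radius `distance`.
    """
    if distance <= 0:
        raise ValueError("distance must be positive")
    return luminosity / (4.0 * math.pi * distance ** 2)


# Compare the same star seen from 1, 2, and 3 units of distance.
reference = observed_brightness(1.0, 1.0)
for d in (1.0, 2.0, 3.0):
    relative = observed_brightness(1.0, d) / reference
    print(f"distance {d:.0f}: relative brightness {relative:.3f}")
```

The relative values fall as 1, 1/4, and 1/9, matching the figure: two times farther means one quarter the brightness, and three times farther means one ninth.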

From our vantage point on Earth, it is relatively simple to measure the apparent magnitude of a distant star. Measuring luminosity, on the other hand, is incredibly difficult (one cannot simply send a probe out to a star many light-years away), because converting observed brightness into luminosity requires knowing the distance to the star. In fact, measuring stellar distances is one of the most difficult ongoing problems in cosmology. Scientists must often combine several different methods to arrive at a reasonable estimate for the distance to a stellar object. Many of these methods make use of unique astronomical objects called standard candles.

[1.5b] Down the Rabbit Hole: Luminosity versus Observed Brightness


Standard Candles

Standard candles are used to calculate astronomical distances. Each of these candles has the same intrinsic luminosity; the only difference is the distance from the observer.

A standard candle is a general term for any class of objects that have the same intrinsic luminosity (that is, they produce the same amount of light energy per unit of time). Objects that astronomers have traditionally used as standard candles include the largest galaxies in clusters, type Ia supernovae, and particularly bright stars called Cepheid variables. Since standard candles of the same type possess the same luminosity, astronomers can calculate distance ratios simply by measuring the apparent magnitudes of two candles: the more distant candle appears dimmer than the closer one, in accordance with the inverse square law.
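The distance-ratio calculation can be sketched in a few lines of Python. This assumes two candles of identical luminosity, no dimming by dust, and the standard modern magnitude convention (5 magnitudes = a factor of 100 in brightness), none of which the text states explicitly. The function name `distance_ratio` and the sample magnitudes are hypothetical.

```python
def distance_ratio(mag_near: float, mag_far: float) -> float:
    """Return how many times farther the second candle is than the first.

    Assumptions (illustrative, not from the text): both candles have the
    same luminosity, no light is absorbed along the way, and 5 magnitudes
    correspond to a brightness factor of 100.
    """
    # Brightness ratio is 10 ** (0.4 * dm); brightness scales as 1 / d**2,
    # so the distance ratio is its square root: 10 ** (dm / 5).
    return 10 ** ((mag_far - mag_near) / 5.0)


# Two hypothetical candles of the same type, observed 5 magnitudes apart.
print(distance_ratio(10.0, 15.0))
```

A candle that appears 5 magnitudes fainter is 100 times dimmer, so under these assumptions it lies 10 times farther away. Note that this gives only a ratio; turning it into an actual distance requires knowing the distance to at least one candle by some other method.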

These distant objects that we see give off light and consist of visible matter.

[1.5c] Down the Rabbit Hole: Standard Candles


[1.5d] Cosmic Conundrums: Light
